One of my favorite "articles" of all time. I love these types of conversations, in the DB or the Middle Tier? For the vast majority of us, the Database will do just fine. As I've learned more about the data grids and the like, there are trade-offs, which aren't often discussed. One way or another you lose data (say you decide to only UPDATE one time instead of 60). Originally posted on February 20, 2008. Enjoy.

It started innocently enough with this article. I sent it out to about 20 colleagues.

The best line from the article:

"Jerry: "Yeah, databases cause lots of headaches. They crash all the time, corrupt data, etc. Using text files is better."

One of my more recently arrived colleagues (I'll call him Mr. M) replied to everyone with this statement:

"Kind of funny actually, databases are less and less important at the large investment banks, where they basically load everything up into a data grid across a several hundred node cluster. Writing to the db is way too slow."

This started a day long exchange of emails. What follows is the entire thread (up until my last post tonight).

Me:

"I would just argue that they don’t necessarily know how to write to databases. I would however love to see benchmarking done on both methods. Would be an interesting test..."

Mr. M:

"Well, my understanding is they just can’t scale out the db enough. Even something like Oracle RAC won’t work. And outside of the military, these are probably the top 1% of programmers in the world building this stuff."

Me:

"A benchmark would be the only way I would believe it.

If you said the top 1% of database developers tried it and failed, I would be more likely to agree.

My experience is that application developers != database developers. Different type of thinking involved."

Mr. M:

"'A benchmark would be the only way I would believe it.'

Do you need a benchmark before you would believe in-memory retrieval is faster than disk retrieval? Essentially, this is what we’re talking about.

'If you said the top 1% of database developers tried it and failed, I would be more likely to agree. My experience is that application developers != database developers. Different type of thinking involved.'

Why? It’s an issue to do with application performance not simply database performance. Database concerns are a subset of application concerns, essentially a specialization, requiring less encompassing knowledge. ;)

From the article you linked to (http://www.watersonline.com/public/showPage.html?page=432587)

"Better data management is the answer, says Lewis Foti, manager of high-performance computing and grid at The Royal Bank of Scotland (RBS) global banking and markets. "For very large compute arrays, the key issue is data starvation and saturation. This problem requires data grids with high bandwidth and scalable, parallel access,

...

Banks are learning that data management in a distributed grid environment is very different from online transaction processing. "With so many data sources, distribution channels, demands for aggregation and analytics, surges in data volumes and complex dynamics between the flows, we need to manage 'data in motion' and give up the notion that data is somehow stored. It's dynamic, not static," says Michael Di Stefano, vice president and architect for financial services at GemStone Systems

...

There is even some debate over how small a unit of work can be put on today's grids. Di Stefano at GemStone, for example, says, "One client has gone from 200 trades per second in a program trading application to more than 6,000 trades per second. This shows what the technology can do."

Yep, the writing is on the wall. Oracle knows it too.

http://www.google.com/search?hl=en&q=oracle+buys+tangosol&btnG=Google+Search"

Me:

"Good points. If it is in-memory it would be faster. I have not had the pleasure to work on such a system.

I do disagree with the database concerns being a subset of application concerns. The data drives the app. We’re probably getting religious at this point (or am I)."

Mr. M:

"‘The data drives the app.”

Exactly, but who’s to say where the data comes from or in what format? My application data may reside completely in xml files, or maybe I get it from some third party web services a la the en vogue “mashup.” Heck, I may not even need to worry about a database anymore…. http://www.amazon.com/gp/browse.html?node=16427261 The database is only one particular concern of the overall application. And it’s the application that matters. Data is useless if it just sits on a disk somewhere. It’s the ways in which the application lets the users view and manipulate the data that adds value to the business.

Yep, definitely a different type of thinking between application developers and database developers."

Me:

"Definitely religious now.

Applications come and go, data stays the same. Think Green Screens, EJBs, Ruby…what’s next?"

Mr. M:

"'Applications come and go'

Exactly. Businesses are not static, nor are the markets they compete in. Changing applications are a function of changing business processes and changing markets.

'data stays the same.'

Nonsense. Otherwise UPDATE would not be an SQL reserved word. If you mean database technology stays the same, well, I’m more inclined to agree with that.

'Think Green Screens, EJBs, Ruby...what’s next?'

Whatever comes along to let the business more effectively respond to current market realities. Application platforms have evolved much faster than database platforms have. They’ve had to, their sphere of operation is much broader than that of databases, this is only natural, they deal with much broader concerns than do databases. Databases in the internet era function in essentially the same role they did in the era of dumb terminals. Clearly application platforms have evolved orders of magnitude more. Hence the statement, database concerns are a subset of application concerns.

Here’s a simple test….if I take some business application and I’m forced to throw away one or the other, either the database or the appl- wait a second, it doesn’t even make sense to finish it, does it? The business can live without the database. I could do all kinds of things with the data, I could stick it anywhere. The business can’t live without the application though. Another way to look at is, what do the business users look at, test, approve, and use? The database? Of course not, they look at the application. They could care less whether the data sits on disk in an RDBMS, xml, or flat files."

Me:

"We obviously violently disagree.

Without the database (and I use database and data interchangebly), the business could no longer function. The app is meaningless. How would you contact your customer? You couldn’t find it.

'Exactly. Businesses are not static, nor are the markets they compete in. Changing applications are a function of changing business processes and changing markets.'

Poorly designed applications…that is all."

A Feisty Colleague:

"Using data and database interchangeably is incorrect. A database is a mechanism for data storage. XML data sets and flat files are mechanisms for data storage, too. So is a file cabinet, because, the data doesn’t have to be electronic, it could be … gasp! … on paper, and the application to use that data would be hands for holding the paper and a pencil to update and add data to the page."

Me:

"No it isn’t. I take into account xml files, flat files, web services (but not paper, unless it’s scanned) and all that. It would be consumed by the database and then accessed by the application via SQL.

(that’s for Mr. M and the feisty one)"

At which point someone forwarded the home page for Oracle's TimesTen In-Memory Database.

Me:

"A database on/in the mid-tier...Perfect!"

Mr. M:

"Implicit acknowledgment that disk IO operations that come with traditional database access simply can’t match the performance of in-memory data access (a point which you previously were unconvinced of but now seem perfectly accepting of the idea once you see it’s got Oracle’s imprimatur on it).

Of course, why any application developer would want to program against an SQL interface if they weren’t forced to is beyond me. It is orthogonal to the programming model of most application platform languages.

Surely Oracle recognize this fact too or they wouldn’t be buying Tangosol and other data grid technologies. Of course, most of those products are far more technically advanced than TimesTen or anything Oracle has in that space.

Incidentally, it’s illustrative to note that Coherence and other products like it were for the most part designed and built by application programmers. The development of all these products is pretty much driven by the needs of the large investment banks on Wall Street. These trading applications simply had too many concurrent transactions to use an RDBMS (a problem quite a number of public domains now share, most famously google.com, nope, no RDBMS there, yet miraculously there is still data). The database just simply would not scale to such a degree. So the application developers, by necessity, came up with an alternate solution that did work, a fully transactional cache of data replicated across a cluster with node numbers in the thousands, and no relational model whatsoever to speak of. A perfect example of how database concerns are only one, sometimes small, concern amongst many that application developers must be aware of and ready to solve."

Me:

"Like you said initially, the top 1%.

Many of us will never touch a system like this.

I will certainly concede that it is faster (still would love to see benchmarking though), but that still leaves 99% of the applications out there that do not require that kind of performance."

Me (again):

"And don’t forget, I use data and database interchangeably. Applications are nothing without the data right?

As to the object/relational impedance mismatch...well, more people that don’t know how to work in sets. Looping is what they understand. I understand the application side more than you seem to give me credit for.

I’m not saying applications aren’t important, they are. Data (databases) and applications go hand in hand. If the application went away though, they could still access their data via SELECT statements (yes, via an application client tool), however painful that may be. Applications make retrieving data that much easier for our users.

If anyone wants to unsubscribe from this mailing list, just let us know. This is fun for me (I’m guessing Mr. M too)."

Needless to say it was a fun day. It didn't get [too] personal. More than anything I'm happy to have an equally passionate colleague.

Besides, he claims he was just fracking around with me. ;)

Monday, July 6, 2009

Sunday, July 5, 2009

SQL: SYS_CONTEXT

In my experience so far, I've seen very few places where SYS_CONTEXT is used. It is typically used in conjunction with CONTEXTs and also Virtual Private Database (VPD).

Oracle has a built in namespace called USERENV and their is a wealth of information you can retrieve from there. I wrote up a quick view to demonstrate the use of SYS_CONTEXT:

You can create a context for just about anything you want. I've used them in the past along with table based security in a stateless environment. In essence, I create a record in a table with a SESSION_ID, I then store that in the context (global variable) and I use that to reconnect to the database each time.

I promise to have an example of using it in the near future.

Oracle has a built in namespace called USERENV and their is a wealth of information you can retrieve from there. I wrote up a quick view to demonstrate the use of SYS_CONTEXT:

SELECTWhich yields the following:

SYS_CONTEXT( 'USERENV', 'ACTION' ) action,

SYS_CONTEXT( 'USERENV', 'AUTHENTICATION_METHOD' ) authentication_method,

SYS_CONTEXT( 'USERENV', 'BG_JOB_ID' ) bg_job_id,

SYS_CONTEXT( 'USERENV', 'CLIENT_IDENTIFIER' ) client_identifier,

SYS_CONTEXT( 'USERENV', 'CLIENT_INFO' ) client_info,

SYS_CONTEXT( 'USERENV', 'CURRENT_SCHEMAID' ) current_schemaid,

SYS_CONTEXT( 'USERENV', 'CURRENT_SCHEMA' ) current_schema,

SYS_CONTEXT( 'USERENV', 'CURRENT_USER' ) current_user,

SYS_CONTEXT( 'USERENV', 'DB_DOMAIN' ) db_domain,

SYS_CONTEXT( 'USERENV', 'DB_NAME' ) db_name,

SYS_CONTEXT( 'USERENV', 'DB_UNIQUE_NAME' ) db_unique_name,

SYS_CONTEXT( 'USERENV', 'HOST' ) host,

SYS_CONTEXT( 'USERENV', 'INSTANCE' ) instance,

SYS_CONTEXT( 'USERENV', 'INSTANCE_NAME' ) instance_name,

SYS_CONTEXT( 'USERENV', 'IP_ADDRESS' ) ip_address,

SYS_CONTEXT( 'USERENV', 'ISDBA' ) isdba,

SYS_CONTEXT( 'USERENV', 'LANG' ) lang,

SYS_CONTEXT( 'USERENV', 'LANGUAGE' ) language,

SYS_CONTEXT( 'USERENV', 'NETWORK_PROTOCOL' ) network_protocol,

SYS_CONTEXT( 'USERENV', 'NLS_CALENDAR' ) nls_calendar,

SYS_CONTEXT( 'USERENV', 'NLS_CURRENCY' ) nls_currency,

SYS_CONTEXT( 'USERENV', 'OS_USER' ) os_user,

SYS_CONTEXT( 'USERENV', 'SERVER_HOST' ) server_host,

SYS_CONTEXT( 'USERENV', 'TERMINAL' ) terminal

FROM dual

ACTION: NULLBasically, these are global variables that Oracle stores on the current session/user.

AUTHENTICATION_METHOD: PASSWORD

BG_JOB_ID: NULL

CLIENT_IDENTIFIER: NULL

CLIENT_INFO: NULL

CURRENT_SCHEMAID: 88

CURRENT_SCHEMA: CJUSTICE

CURRENT_USER: CJUSTICE

DB_DOMAIN: NULL

DB_NAME: testing

DB_UNIQUE_NAME: testing

HOST: cdj-laptop

INSTANCE: 1

INSTANCE_NAME: TESTING

IP_ADDRESS: 192.168.1.4

ISDBA: FALSE

LANG: US

LANGUAGE: AMERICAN_AMERICA.WE8MSWIN1252

NETWORK_PROTOCOL: tcp

NLS_CALENDAR: GREGORIAN

NLS_CURRENCY: $

OS_USER: chet

SERVER_HOST: oracledb

TERMINAL: UNKNOWN

You can create a context for just about anything you want. I've used them in the past along with table based security in a stateless environment. In essence, I create a record in a table with a SESSION_ID, I then store that in the context (global variable) and I use that to reconnect to the database each time.

I promise to have an example of using it in the near future.

Thursday, July 2, 2009

Learning From Failure

I think I began reading The Daily WTF about 4 years ago. I don't miss or skip a post.

I remember this one time, probably about the time I began reading the site, I had to automate a process to move files from one server to another. Originally, I had tried to create a network drive (yes, it was Windows) on the database server so that I could just use a simple Java class to read the directory and then load the files via DBMS_LOB.

I had ultimately decided on a service, but I didn't know how to write one for Windows. Then I found the Java Service Wrapper which would allow me to write the guts in Java and then install it on Windows as a service. Perfect.

Now that I had that settled, I had to figure out how to detect when a file was read to be moved. I decided on a looping mechanism, to check every minute or so, to see if a file was available. It looked something like this (I'm a tad rusty, so bear with me):

Then I got a call from the server admin.

SA: "You've got something running on this machine that's spiking the CPU."

Me: "Really? I can't think of anything."

SA: "Well, take a look and see if you can find anything."

Me: "10-4"

Sure enough, go into Task Manager and there's java.exe hogging up all the CPU. WTF?

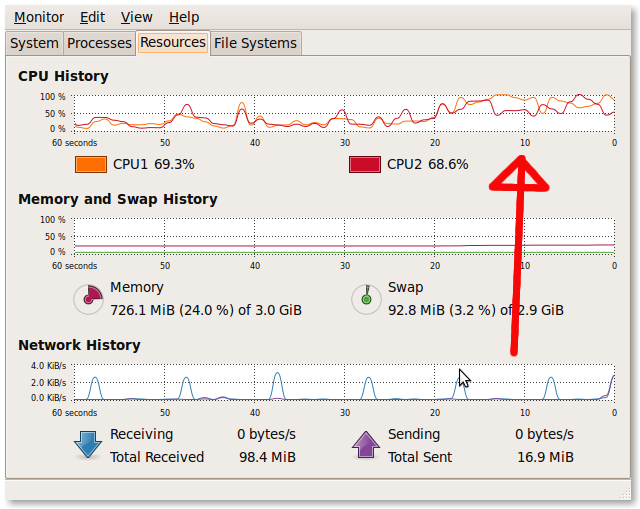

I just ran this on my machine and you can see the CPU start to spike:

Off to Google to see what I can find. During my research, I found mention of a small method called Thread.sleep(long). So I replaced my brilliant add 1000000 to the current date with Thread.sleep(6000), or whatever equals 60 seconds. Problem solved.

A short time later I read a post on The Daily WTF about the same exact problem (I can't find the exact post for the life of me). The "victim" did the exact same thing I did. The solution was the Thread.sleep() call.

Me = FAIL

One more short example.

Over beers, a friend (see last entry) of mine and I were discussing the failure of the North Korean missile launch. I said, "Idiots." He said, "They're going to learn a lot more from that failure than they would have had it suceeded."

Spoken like a true engineer I guess.

The point? You learn by trying. You learn my doing. You learn by failing. Whether you realize it or not, you learn. (Well, some people don't, but that's another post). If you're reading here though, that probably means you have a passion for what you do. That means that you are trying. You are learning (maybe not here specifically ;).

Here's to trying and failing and hopefully trying and succeeding.

I remember this one time, probably about the time I began reading the site, I had to automate a process to move files from one server to another. Originally, I had tried to create a network drive (yes, it was Windows) on the database server so that I could just use a simple Java class to read the directory and then load the files via DBMS_LOB.

I had ultimately decided on a service, but I didn't know how to write one for Windows. Then I found the Java Service Wrapper which would allow me to write the guts in Java and then install it on Windows as a service. Perfect.

Now that I had that settled, I had to figure out how to detect when a file was read to be moved. I decided on a looping mechanism, to check every minute or so, to see if a file was available. It looked something like this (I'm a tad rusty, so bear with me):

package project1;It wasn't pretty, but it seemed to work.

import java.util.Date;

public class Class1

{

public static void main(String[] args)

{

Class1 class1 = new Class1();

Date d = new Date();

long l = d.getTime() + 1000000000;

String s = String.valueOf( l );

for ( int i = 0; i < l; i++ )

{

//some sort of MOD "wait" here, then check for the file

}

}

}

Then I got a call from the server admin.

SA: "You've got something running on this machine that's spiking the CPU."

Me: "Really? I can't think of anything."

SA: "Well, take a look and see if you can find anything."

Me: "10-4"

Sure enough, go into Task Manager and there's java.exe hogging up all the CPU. WTF?

I just ran this on my machine and you can see the CPU start to spike:

|

Off to Google to see what I can find. During my research, I found mention of a small method called Thread.sleep(long). So I replaced my brilliant add 1000000 to the current date with Thread.sleep(6000), or whatever equals 60 seconds. Problem solved.

A short time later I read a post on The Daily WTF about the same exact problem (I can't find the exact post for the life of me). The "victim" did the exact same thing I did. The solution was the Thread.sleep() call.

Me = FAIL

One more short example.

Over beers, a friend (see last entry) of mine and I were discussing the failure of the North Korean missile launch. I said, "Idiots." He said, "They're going to learn a lot more from that failure than they would have had it suceeded."

Spoken like a true engineer I guess.

The point? You learn by trying. You learn my doing. You learn by failing. Whether you realize it or not, you learn. (Well, some people don't, but that's another post). If you're reading here though, that probably means you have a passion for what you do. That means that you are trying. You are learning (maybe not here specifically ;).

Here's to trying and failing and hopefully trying and succeeding.

Monday, June 29, 2009

Constraints: ENABLE NOVALIDATE

Yesterday while perusing the Concepts Guide, I stumbled across the ENABLE NOVALIDATE keywords for the definition of a Foreign Key constraint. I've always known it was there, just never used it, or thought to use it.

It can be a big benefit while working on a legacy system.

Suppose you have a table, T_CHILD:

This is just another reminder why you must read the Concepts Guide. By the way, I found the quote I was looking for from Mr. Kyte (h/t @boneist)

It can be a big benefit while working on a legacy system.

Suppose you have a table, T_CHILD:

CREATE TABLE t_childThis table has been around for quite some time. You decide that you would like to constrain the values in the SOON_TO_BE_PARENT_ID column. First, here's the data that exists:

(

child_id NUMBER(10)

CONSTRAINT pk_childid PRIMARY KEY,

soon_to_be_parent_id NUMBER(10)

);

INSERT INTO t_child

( child_id,

soon_to_be_parent_id )

SELECT

rownum,

TRUNC( dbms_random.value( -9999, -1 ) )

FROM dual

CONNECT BY LEVEL <= 10;

CJUSTICE@TESTING>SELECT * FROM t_child;Now I'll create a table that will contain the values I want to constraint SOON_TO_BE_PARENT_ID to, call it a lookup or reference table.

CHILD_ID SOON_TO_BE_PARENT_ID

---------- --------------------

1 -5560

2 -1822

3 -2499

4 -7039

5 -8718

6 -1019

7 -9997

8 -9553

9 -4477

10 -1458

CREATE TABLE t_parentI'll populate it with some data:

(

parent_id NUMBER(10)

CONSTRAINT pk_parentid PRIMARY KEY

);

INSERT INTO t_parent( parent_id )Now I'll add the constraint that references the PARENT_ID column of T_PARENT

SELECT rownum

FROM dual

CONNECT BY LEVEL <= 10;

CJUSTICE@TESTING>SELECT * FROM T_PARENT;

PARENT_ID

----------

1

2

3

4

5

6

7

8

9

10

10 rows selected.

ALTER TABLE t_childand rename the column to PARENT_ID:

ADD CONSTRAINT fk_parentid

FOREIGN KEY ( soon_to_be_parent_id )

REFERENCES t_parent( parent_id )

ENABLE

NOVALIDATE;

ALTER TABLE t_child RENAME COLUMN soon_to_be_parent_id TO parent_id;What will this do? I should no longer be able to enter a value into T_CHILD.PARENT_ID that does not exist in T_PARENT, but it will ignore anything that already exists.

INSERT INTO t_childPerfect! Now I'll add a value that does exist in T_PARENT.

( child_id,

parent_id )

VALUES

( 11,

11 );

INSERT INTO t_child

*

ERROR at line 1:

ORA-02291: integrity constraint (CJUSTICE.FK_PARENTID) violated - parent key not found

INSERT INTO t_childWin!

( child_id,

parent_id )

VALUES

( 11,

10 );

1 row created.

This is just another reminder why you must read the Concepts Guide. By the way, I found the quote I was looking for from Mr. Kyte (h/t @boneist)

"...if you simply read the Concepts Guide...and retain just 10%..., you’ll already know 90% more than most people do"

Subscribe to:

Posts (Atom)